Enterprise AI is moving past the novelty phase. The next fight will not be won by the company with the cleverest prompt or the loudest model announcement. It will be won by the company that can turn model intelligence into controlled, repeatable, observable work.

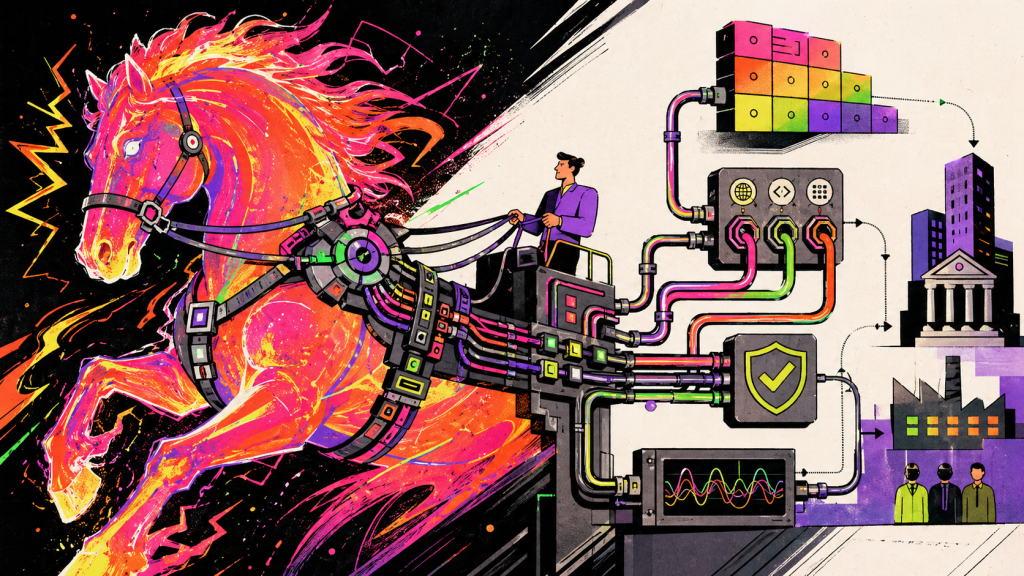

That is the point of harness engineering. A harness is the system around the model: tools, permissions, context, memory, state, orchestration, evaluations, approvals, logging, cost controls, and recovery paths. LangChain frames it cleanly as “Agent = Model + Harness,” with the harness covering the code, configuration, tools, middleware, execution flows, sandboxes, and memory management that make the model useful in real work. (LangChain)

The best metaphor I have seen is that the agent harness is becoming the new shell. The Unix shell gave humans a composable interface to the machine. The agent shell gives models a composable interface to enterprise systems, SaaS platforms, codebases, documents, workflows, and humans. Inference.sh makes this argument well: the old shell had one machine and one kernel, but the enterprise world now has cloud compute, SaaS tools, OAuth walls, distributed knowledge, and rented model inference. (inference.sh)

This matters because the model by itself does not know your business process. It does not know which actions require approval, which clients are sensitive, which data can cross boundaries, which exception is material, which workflow must be auditable, or which downstream system breaks when an enum changes. The harness is where enterprise judgment becomes executable.

Anthropic’s recent writing on Managed Agents is useful because it exposes the core tension. Harnesses encode assumptions about what models cannot do, but those assumptions can go stale as models improve. Anthropic’s answer is to stabilize the interfaces around sessions, harnesses, and sandboxes so the implementation can evolve underneath. (Anthropic)

That should land hard for enterprise technology leaders. If the harness is brittle, every model upgrade becomes a reintegration project. If the harness is well designed, better models become an accelerant rather than a disruption.

The Harness Is Not a Prompt Wrapper

The lazy version of enterprise AI is a chat window over a database. It demos well, then collapses when it meets permissions, exceptions, incomplete records, audit requirements, and angry process owners. The serious version starts with a different question: what machinery must surround the model so it can safely participate in the operating rhythm of the business?

A real enterprise harness needs at least five layers. It needs a context layer that retrieves the right data without flooding the model. It needs a tool layer that exposes actions through typed, versioned, permissioned interfaces. It needs a state layer that tracks what happened, what is pending, and what can be resumed. It needs a governance layer that handles approvals, policy checks, and security boundaries. It needs an observability layer that lets the company inspect decisions, tool calls, failures, cost, and quality.
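To make the layers less abstract, here is a minimal sketch of three of them working together: a tool layer with versioned, role-scoped actions, a governance layer that holds risky actions until approved, and an event log that doubles as state and observability. Every name in it (`Tool`, `Harness`, `lookup_invoice`, `issue_refund`, the `finance_agent` role) is a hypothetical illustration, not an API from any framework cited here, and the context layer is deliberately left out.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Tool:
    """A versioned, permissioned action exposed to the model (hypothetical shape)."""
    name: str
    version: str
    required_role: str
    needs_approval: bool
    run: Callable[..., Any]


@dataclass
class Harness:
    tools: dict[str, Tool] = field(default_factory=dict)
    # Append-only record of every attempted call: state and observability in one place.
    event_log: list[dict] = field(default_factory=list)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def call(self, tool_name: str, role: str, approved: bool = False, **args: Any) -> dict:
        tool = self.tools[tool_name]
        if role != tool.required_role:
            # Governance: the caller's role is not allowed to use this tool.
            outcome = {"status": "denied", "reason": "role not permitted"}
        elif tool.needs_approval and not approved:
            # Governance: the action is parked until a human approves it.
            outcome = {"status": "pending_approval"}
        else:
            outcome = {"status": "ok", "result": tool.run(**args)}
        # Log denied and pending calls too, so the audit trail is complete.
        self.event_log.append(
            {"tool": tool_name, "version": tool.version, "role": role, "args": args, **outcome}
        )
        return outcome


harness = Harness()
harness.register(Tool(
    "lookup_invoice", "1.0", "finance_agent", False,
    lambda invoice_id: {"invoice_id": invoice_id, "amount": 120.0},
))
harness.register(Tool(
    "issue_refund", "1.0", "finance_agent", True,
    lambda invoice_id, amount: f"refunded {amount} on {invoice_id}",
))

read = harness.call("lookup_invoice", role="finance_agent", invoice_id="INV-1")
blocked = harness.call("issue_refund", role="finance_agent", invoice_id="INV-1", amount=120.0)
done = harness.call("issue_refund", role="finance_agent", approved=True,
                    invoice_id="INV-1", amount=120.0)
```

The design choice worth noticing is that the model never calls a function directly. It asks the harness, and the harness decides, records, and only then acts. A real system would replace the single-role check with an actual identity and policy service.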

This is why the Model Context Protocol matters, but also why MCP alone is not enough. MCP standardizes how LLM applications connect to external tools, data sources, and workflows. That gives enterprises a better integration substrate, but the strategic work is deciding which tools exist, which context is visible, which permissions apply, and which business process owns the outcome. (Model Context Protocol)

The same is true for OpenAI’s Agents SDK and Anthropic’s Managed Agents. OpenAI describes agents as applications that plan, call tools, collaborate across specialists, and maintain enough state to complete multi-step work. It also says the SDK is appropriate when your application owns orchestration, tool execution, approvals, and state. (OpenAI Developers) Anthropic’s Managed Agents, by contrast, offers a pre-built configurable harness and managed infrastructure for long-running work, with containers, stateful sessions, tool execution, and event history handled by the platform. (Claude)

That is the build versus buy decision in miniature. Buy the commodity runtime where it is not differentiating. Own the business harness where the process, judgment, data, and institutional memory create advantage.

The New Moat Is Not the Model

The uncomfortable truth is that most companies will not build a better foundation model. They will rent intelligence from a small number of providers, and those providers will keep getting better. Competing on raw model access is not a strategy.

The moat is the harness around your work. It is the encoded knowledge of how your company sells, builds, reviews, escalates, prices, supports, governs, and improves. It is your product operating model turned into software.

Latent Space’s discussion of harness engineering around coding agents makes this practical. The compelling part is not just “agents write code.” It is the system around them: fast build loops, observability, specs, skills, quality scores, and workflows that make the agent legible to the organization. The bottleneck shifts from tokens to human attention, which means the winning teams design systems that let agents operate with less review toil and more reliable feedback. (Latent.Space)

Martin Fowler’s coverage sharpens the engineering lens. A good harness uses feedforward controls to steer the agent before it acts and feedback sensors to help it self-correct after it acts. That means instructions, architecture rules, how-to guides, tests, linters, static analysis, review agents, runtime signals, and quality checks all become part of the steering loop. (martinfowler.com)
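The steering loop described above can be sketched in a few lines. This is an illustration under stated assumptions, not the implementation from the cited article: `RULES` stands in for feedforward controls, the two sensor functions stand in for linters or tests, and `stub_agent` is a hypothetical placeholder for a real model call.

```python
from typing import Callable, Optional

# Feedforward controls: guidance handed to the agent before it acts.
RULES = ["Return a Python function named add.", "Do not use eval."]

# A sensor inspects a draft and returns an error message, or None if it passes.
Sensor = Callable[[str], Optional[str]]


def no_eval(draft: str) -> Optional[str]:
    return "draft uses eval" if "eval(" in draft else None


def defines_add(draft: str) -> Optional[str]:
    return None if "def add(" in draft else "draft does not define add"


def steer(agent, task: str, rules: list[str], sensors: list[Sensor], max_attempts: int = 3):
    """Run the agent, feed sensor failures back to it, and stop when all sensors pass."""
    feedback: list[str] = []
    for attempt in range(1, max_attempts + 1):
        draft = agent(task, rules, feedback)
        # Feedback sensors: collect every failure message for the next attempt.
        feedback = [msg for sensor in sensors if (msg := sensor(draft)) is not None]
        if not feedback:
            return draft, attempt
    raise RuntimeError(f"sensors still failing after {max_attempts} attempts: {feedback}")


def stub_agent(task: str, rules: list[str], feedback: list[str]) -> str:
    """Hypothetical stand-in for a model: it only improves once it sees feedback."""
    if not feedback:
        return "def add(a, b): return eval(f'{a}+{b}')"
    return "def add(a, b): return a + b"


draft, attempts = steer(stub_agent, "write add", RULES, [no_eval, defines_add])
```

Here the first draft trips the `no_eval` sensor, the failure message is passed back, and the second draft passes. The bounded retry count matters: a harness that loops forever on a failing sensor is a cost problem, not a quality control.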

This is where enterprises should pay attention. Harness engineering is not only an AI discipline. It is a modernization discipline. It forces you to define the work clearly enough that a non-human actor can participate safely.

If your onboarding process, pricing workflow, engineering standards, or compliance review cannot be described, instrumented, evaluated, and resumed, the problem is not the model. The problem is that the business process was never truly productized.

Build, Buy, or Own?

The first wrong answer is to build everything. The second is to outsource the whole harness and pretend there is no strategic consequence.

You should buy or adopt standard layers where the market will move faster than you can. Sandboxed execution, generic tracing, common tool protocols, model routing, session infrastructure, and commodity connectors are not where most enterprises should burn scarce engineering capacity. OpenTelemetry’s work on GenAI and AI agent observability is a good example of a layer where standards matter because vendor-specific telemetry creates lock-in and makes performance harder to compare across frameworks. (OpenTelemetry)

You should own the parts that express how your business works. That includes your domain memory, your workflow contracts, your approval policies, your eval suites, your golden task sets, your tool permission model, your quality thresholds, and your operating metrics. Those are not generic plumbing. They are the executable shape of your company’s judgment.
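As a rough illustration of what owning an eval suite means in practice, the sketch below scores an agent against a small golden task set and gates on a quality threshold. The tasks, the 0.9 threshold, the exact-match scoring, and `stub_agent` are all invented for the example. A real suite would hold far more cases drawn from your own process history and would likely need richer scoring.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class GoldenTask:
    task_id: str
    prompt: str
    expected: str  # the answer your best reviewers agree is correct


def run_eval(agent: Callable[[str], str], tasks: list[GoldenTask], threshold: float) -> dict:
    """Score the agent by exact match on each golden task and compare to the threshold."""
    results = {
        t.task_id: agent(t.prompt).strip().lower() == t.expected.lower()
        for t in tasks
    }
    pass_rate = sum(results.values()) / len(results)
    return {
        "pass_rate": pass_rate,
        "passed": pass_rate >= threshold,
        "failures": [task_id for task_id, ok in results.items() if not ok],
    }


# Hypothetical golden set for a refund-approval workflow.
GOLDEN = [
    GoldenTask("t1", "Refund of 50 on a 40 invoice: approve or escalate?", "escalate"),
    GoldenTask("t2", "Refund of 10 on a 40 invoice: approve or escalate?", "approve"),
    GoldenTask("t3", "Refund of 10 for a flagged client: approve or escalate?", "escalate"),
    GoldenTask("t4", "Refund of 5 on a 40 invoice: approve or escalate?", "approve"),
]


def stub_agent(prompt: str) -> str:
    """Hypothetical stand-in for a model: it knows about flagged clients but not over-refunds."""
    return "escalate" if "flagged" in prompt else "approve"


report = run_eval(stub_agent, GOLDEN, threshold=0.9)
```

The stub misses the over-refund case, so the report fails the gate and names `t1` as the failure. The golden set and the threshold are the portable assets here. The agent behind `agent(prompt)` can be swapped for any vendor's model without touching them.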

Memory is a particularly strategic fault line. LangChain argues that harnesses and memory are tightly connected because the harness decides what context is loaded, what survives compaction, how interactions are stored, and whether long-term memory remains portable. A closed harness can create real lock-in if the enterprise loses visibility or control over its agent memory. (LangChain)

So the practical answer is hybrid. Buy the rails, own the route map. Use managed infrastructure where it accelerates delivery, but keep your process contracts, business memory, evaluation data, and governance policies portable enough that you are not trapped by a single vendor’s implementation.

The Executive Question

Asking whether harness engineering is a moat or infrastructure is the wrong question. Harness engineering is both, depending on the layer.

The base harness will become required infrastructure. Every serious company will need secure tool access, identity-aware retrieval, event logs, approvals, observability, evals, and cost controls. That will become as expected as CI/CD, SSO, and monitoring.

The differentiated harness will become a moat. The companies that win will encode their best people’s judgment into reusable skills, workflows, policies, and sensors. They will turn tribal knowledge into operational software. They will make their systems legible to agents and their agents accountable to the business.

That is the shift. AI strategy is no longer only about picking models or deploying copilots. It is about designing the harness that lets intelligence move through the enterprise without creating chaos.

The companies that understand this will not just automate tasks. They will industrialize judgment.